Hatchet vs Temporal

Hatchet is a Temporal alternative that offers simplicity as you're getting started, and fully-featured durable execution as you scale.

Overview

Hatchet and Temporal are both platforms for writing durable workflows: workflows whose intermediate state is persisted, so that if a worker crashes or fails partway through, the workflow automatically resumes where it left off. This is particularly useful for AI agents, long-running jobs, and business-critical workflows. For example, here's the same workflow, implemented in both Temporal and Hatchet (shown in Python, though both platforms offer SDKs in several languages):

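Independently of either SDK, the core idea of durable execution can be sketched in plain Python. This is a minimal illustration, not Hatchet's or Temporal's implementation: the `run_workflow` helper, the `WorkerCrash` exception, the step names, and the JSON state file are all hypothetical. Each step's result is checkpointed, so a second attempt skips the work that already finished.

```python
import json
import tempfile
from pathlib import Path


class WorkerCrash(Exception):
    """Simulates a worker dying partway through a workflow (hypothetical)."""


def run_workflow(steps, state_path):
    """Run named steps in order, persisting each result so a rerun skips finished steps."""
    state = json.loads(state_path.read_text()) if state_path.exists() else {}
    for name, fn in steps:
        if name in state:
            continue  # completed in an earlier attempt; reuse the stored result
        state[name] = fn(state)
        state_path.write_text(json.dumps(state))  # checkpoint after every step
    return state


calls = []  # records which steps actually executed, across attempts
crash_once = {"armed": True}


def fetch(state):
    calls.append("fetch")
    return [1, 2, 3]


def transform(state):
    calls.append("transform")
    if crash_once["armed"]:
        crash_once["armed"] = False
        raise WorkerCrash("worker died during transform")
    return [x * 2 for x in state["fetch"]]


def store(state):
    calls.append("store")
    return sum(state["transform"])


steps = [("fetch", fetch), ("transform", transform), ("store", store)]
state_path = Path(tempfile.mkdtemp()) / "workflow_state.json"

try:
    run_workflow(steps, state_path)  # first attempt crashes in "transform"
except WorkerCrash:
    pass

result = run_workflow(steps, state_path)  # second attempt resumes after "fetch"
```

In this sketch `fetch` runs only once even though the workflow is started twice. A real platform persists this state on a server rather than in a local file, so any worker can resume the run.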

However, there are some notable differences:

- Hatchet's approach is batteries-included: it provides a full observability layer, including an OpenTelemetry collector and log sink, alerting, a fully-featured UI, pub/sub and streaming support, and deep integrations for modern programming tools like Claude Code and Cursor.

- Hatchet is much more flexible in how you build tasks and workflows. Hatchet lets you easily run low-latency, highly scalable one-off tasks along with more complex workflows when you need them. So while Hatchet has all the same features for durable execution, they are not the only type of workload supported on the platform.

- Hatchet has a larger feature set for scaling your workload, such as multiple fairness strategies, rate limiting, and routing support.

Hatchet strikes the rare balance between reliability and flexibility — giving you durable, fault-tolerant task execution without locking you into rigid workflows.

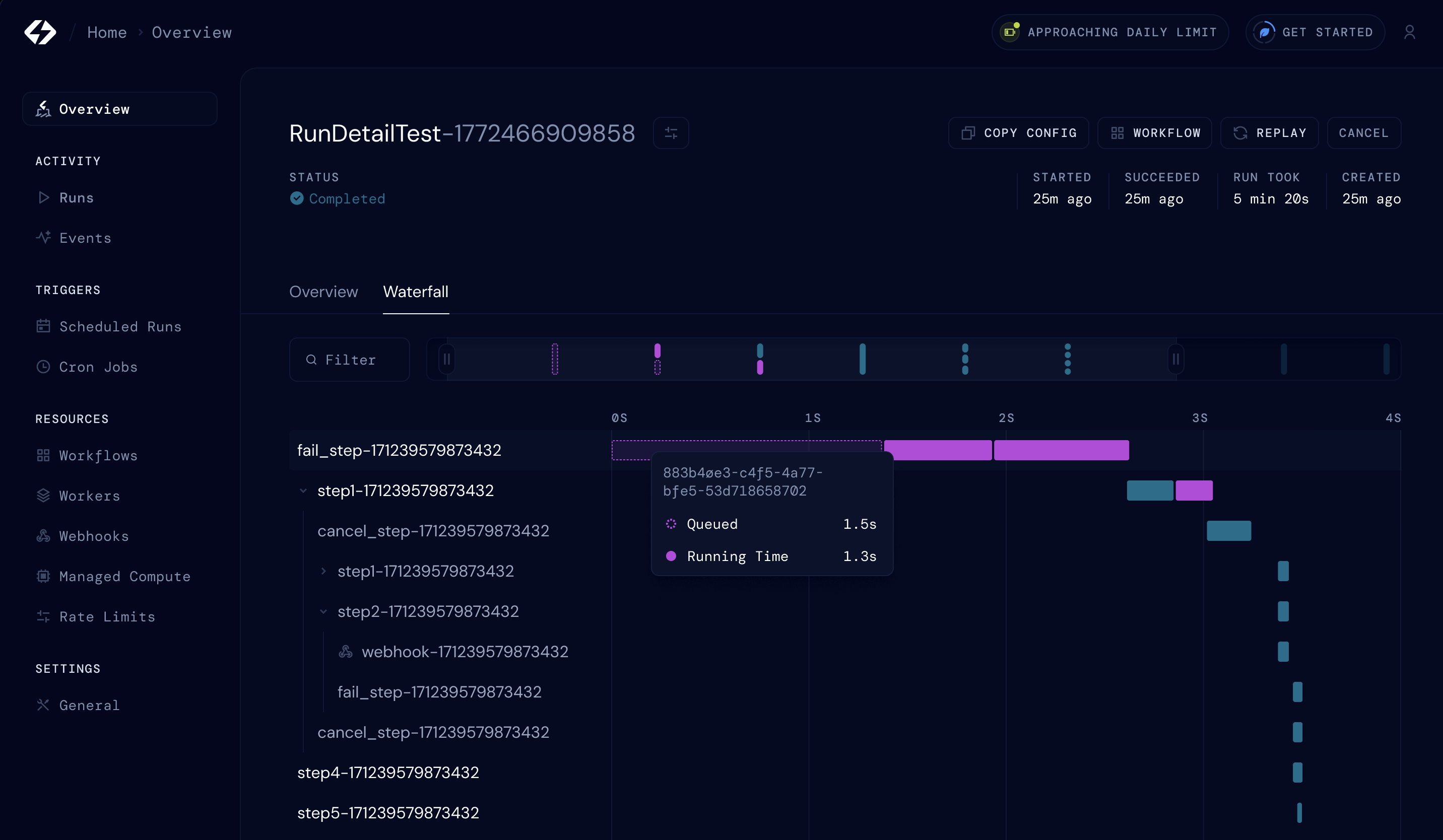

Observability

Hatchet aims to make it as easy as possible to answer the question: why did my workflow fail? Answering it requires contextual logging, tracing, and observability for every task that runs in the system. For this reason, Hatchet bundles a log ingestor and OpenTelemetry collector by default, rather than requiring developers to spend weeks setting up integrations with third-party providers (although instrumenting these yourself is very much possible, see here).
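To make "contextual logging" concrete, here is a small sketch using only Python's standard library. It does not use Hatchet's logging API: `run_task`, `TaskContextFilter`, and the run IDs are hypothetical names chosen for illustration. Every log line is tagged with the ID of the task run that produced it, which is the property that lets you trace a failure back to a specific run.

```python
import contextvars
import io
import logging

# Holds the ID of the task run currently executing (hypothetical naming).
current_task = contextvars.ContextVar("current_task", default="-")


class TaskContextFilter(logging.Filter):
    """Attach the current task run ID to every log record."""

    def filter(self, record):
        record.task_run_id = current_task.get()
        return True


log_stream = io.StringIO()  # stands in for a real log sink
handler = logging.StreamHandler(log_stream)
handler.setFormatter(logging.Formatter("%(levelname)s task=%(task_run_id)s %(message)s"))
handler.addFilter(TaskContextFilter())

logger = logging.getLogger("worker_sketch")
logger.setLevel(logging.INFO)
logger.addHandler(handler)
logger.propagate = False


def run_task(task_run_id, fn):
    """Run fn with its run ID bound, logging start, success, or failure."""
    token = current_task.set(task_run_id)
    try:
        logger.info("started")
        fn()
        logger.info("succeeded")
    except Exception as exc:
        logger.error("failed: %s", exc)
    finally:
        current_task.reset(token)


def send_email():
    logger.info("sending welcome email")


def broken():
    raise ValueError("upstream API returned 500")


run_task("run-001", send_email)
run_task("run-002", broken)
log_lines = log_stream.getvalue().splitlines()
```

Filtering `log_lines` for `task=run-002` isolates the failing run's output, including the error message, without any changes to the task functions themselves.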

Flexibility

The most common starting point for a background job is to run a one-off task, such as sending a welcome email to a new user. In many applications, these types of jobs are the majority of your background processing. Hatchet makes this really simple by providing tasks as a top-level primitive.

However, with Temporal, these types of single-step or two-step workflows require you to write a Temporal workflow, which is overkill for simple queue use-cases. Beyond being overkill, durable execution comes with tradeoffs: it imposes strict limits on how you version and update your workflows. For more information, see our guide on how to think about durable execution.

Massively Parallel Workloads

For use-cases like large-scale data ingestion, you often need to run a large number of tasks in parallel. Hatchet is optimized for this type of workload: in particular, you can configure per-worker slot control so workers only accept the amount of work that they can handle. Additionally, features like rate limiting, multiple fairness strategies, and routing support allow you to distribute load among workers to process large batches of data.
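The idea behind slot control can be sketched with a semaphore in plain Python. This is an illustration of the concept under stated assumptions, not Hatchet's scheduler or configuration API: `run_with_slots` and its `slots` parameter are hypothetical. The worker accepts at most `slots` tasks at a time, and the rest wait for a free slot.

```python
import asyncio


async def run_with_slots(items, slots):
    """Process every item, never running more than `slots` tasks concurrently."""
    semaphore = asyncio.Semaphore(slots)
    running = 0
    peak = 0  # highest concurrency actually observed

    async def handle(item):
        nonlocal running, peak
        async with semaphore:  # wait for a free slot
            running += 1
            peak = max(peak, running)
            await asyncio.sleep(0.01)  # stand-in for real work
            running -= 1
            return item * 2

    results = await asyncio.gather(*(handle(i) for i in items))
    return results, peak


results, peak = asyncio.run(run_with_slots(range(20), slots=4))
```

Here 20 tasks are submitted at once, but `peak` never exceeds the 4 configured slots. Sizing the slot count to what a worker can actually handle is what keeps a large batch from overwhelming it.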

Unlike Temporal, Hatchet does not place any limit on the total number of spawned tasks from a durable workflow. In Temporal, this limit is set to 51,200.

Full comparison

Hatchet aims to provide a full set of tools for background processing, which includes but is not limited to durable execution. Here's a full list of features for comparison: