Hatchet vs Celery

Hatchet is a Celery alternative that preserves the simplicity of distributed tasks while adding durable workflows, built-in observability, operations-ready controls, and sane defaults as you scale.

Overview

Hatchet and Celery are both open-source tools for Python stacks to run background tasks and other asynchronous processing workloads. Celery is a popular distributed task queue library that integrates into many Python frameworks and supports connecting to Redis and RabbitMQ as brokers.

On the other hand, Hatchet is a feature-rich platform that provides a “batteries-included” approach to background task orchestration, including observability, a durable execution layer for tasks, logging, alerting, and much more.

Developers and engineering teams who choose Hatchet over Celery consistently cite a few reasons:

- Native asyncio and modern Python support. Hatchet supports async tasks natively. Celery does not, which is a significant gap given how common it is to call LLMs via long-lived API calls.
- Global rate limiting and concurrency control are built into the Hatchet orchestration layer rather than depending on broker configuration.
- A managed SaaS offering, which means you don’t need to add a broker such as Redis to your stack, as you would for Celery. Many engineering teams also find Hatchet’s engineering support offering helpful as they scale.
- Access to a suite of additional platform features, including concurrency control, rate limiting, lifespans, dependency injection, first-class DAGs and child spawning, durable execution, and much more.

We’ve struggled with a few async task queue solutions that didn’t quite work for us one way or another and Hatchet has offered the best solution. Very easy to set up and get going.

Andrew Lawson, Founder, ProhostAI

A closer look

Observability

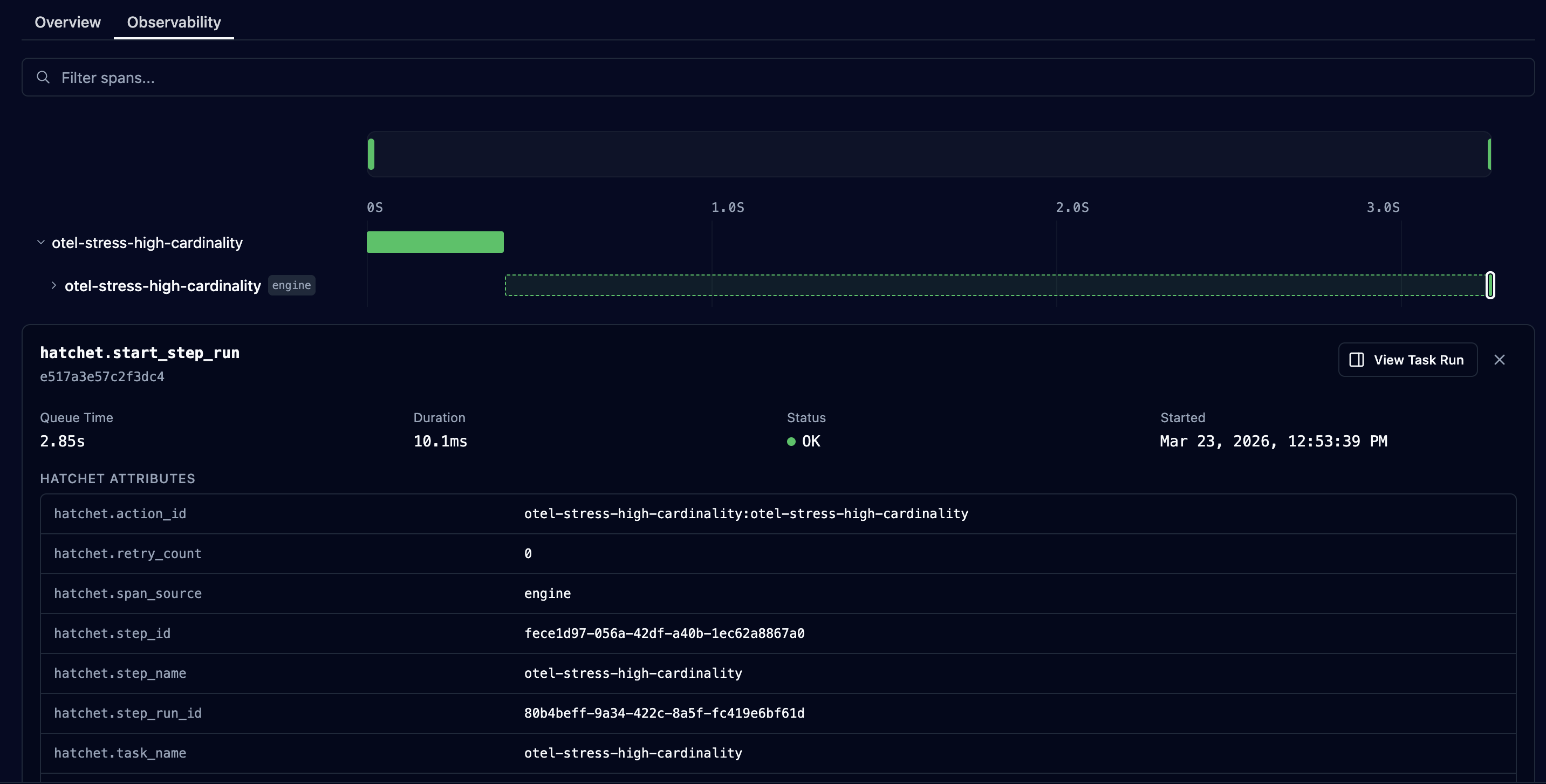

Hatchet aims to make it as easy as possible for you to answer the question: why did my workflow fail? The two most common Celery monitoring options, Flower and a Prometheus → Grafana setup, both have significant gaps. Flower works by listening for Celery events and persisting them to an in-memory store, but it does not support reading tasks from a Celery result backend. At best, you’ll be storing duplicated task data across two data sources. At worst, you’ll miss Celery tasks whenever Flower goes down or restarts. A Prometheus → Grafana setup can answer aggregate questions (for example, which tasks failed in a certain time range, or when latency spiked), but once you identify a failure, drilling down into the actual cause is difficult, because the metrics exporter doesn’t give you details on task kwargs or results.

Hatchet bundles a log ingestor and OpenTelemetry collector by default, rather than requiring you to spend time setting up integrations with third-party providers. It also supports Slack and email-based alerts on failure, and provides a feature-rich UI for debugging and filtering your tasks, so debugging and alerting live in the platform rather than in dashboards and alerting rules that you have to build and maintain yourself.

Asyncio and modern Python

Unlike Hatchet, Celery doesn’t support running async functions as tasks out of the box and doesn’t allow created tasks to be awaited. This becomes a significant problem for developers using Celery for I/O-bound tasks that would benefit from asyncio. Given that a very common use case today is to call external LLMs via a long-lived API call, the lack of asyncio support in Celery is a major drawback.
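To make the I/O-bound argument concrete, here is a minimal sketch in plain `asyncio` (not Hatchet’s or Celery’s API). `fake_llm_call` is a hypothetical stand-in for a long-lived LLM request; `asyncio.sleep` simulates waiting on the network. Because each call yields to the event loop while it waits, ten calls finish in roughly the time of one instead of ten times as long.

```python
import asyncio
import time


async def fake_llm_call(prompt: str, latency_s: float = 0.2) -> str:
    # Hypothetical LLM call: sleeping simulates waiting on network I/O
    # without blocking the event loop.
    await asyncio.sleep(latency_s)
    return f"response to {prompt!r}"


async def run_batch(prompts: list[str]) -> list[str]:
    # All calls are in flight at once; gather returns results in input order.
    return await asyncio.gather(*(fake_llm_call(p) for p in prompts))


if __name__ == "__main__":
    start = time.perf_counter()
    results = asyncio.run(run_batch([f"q{i}" for i in range(10)]))
    elapsed = time.perf_counter() - start
    # elapsed is close to 0.2s, not 10 x 0.2s
    print(len(results), f"{elapsed:.2f}s")
```

A synchronous worker would instead spend the whole latency of each call blocked, which is why native async tasks matter for this workload.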

Hatchet takes inspiration from FastAPI to make writing tasks feel very natural to developers who have worked with FastAPI or other async Python frameworks before. See the quickstart to get up and running in minutes.

Flexibility

The most common starting point for a background job is to run a one-off task, such as sending a welcome email to a new user. Celery makes this easy with @shared_task and .delay() or .apply_async(). When you need durable multi-step logic, where a run interrupted by a worker crash resumes safely without fragile hand-rolled state, you typically have to add idempotency checks, external state stores, or another orchestration layer.
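The following is a minimal sketch of the kind of hand-rolled checkpointing this paragraph refers to, written in plain Python rather than against Celery or Hatchet. The names `run_workflow` and `CheckpointStore` are hypothetical, and a dict stands in for an external store such as Redis or Postgres. Each completed step’s result is recorded, so a retry after a crash skips steps that already finished.

```python
from typing import Callable

# Hypothetical external store (Redis, Postgres, ...); a dict keeps the sketch
# self-contained. Maps run_id -> {step_name: result}.
CheckpointStore = dict[str, dict[str, object]]


def run_workflow(
    run_id: str,
    steps: list[tuple[str, Callable[[dict], object]]],
    store: CheckpointStore,
) -> dict[str, object]:
    done = store.setdefault(run_id, {})
    for name, fn in steps:
        if name in done:
            continue  # completed on a previous attempt; don't redo it
        # A step that crashes before its result is recorded runs again on
        # retry, so each step must itself be idempotent.
        done[name] = fn(dict(done))  # each step sees earlier results
    return done
```

For example, if the second of two steps raises on its first attempt, calling `run_workflow` again with the same `run_id` re-runs only that second step. Even this small version leaves you owning the store, the retry trigger, and the idempotency of every step, which is the burden the next paragraph describes moving into the platform.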

Hatchet treats durable tasks and workflows as first-class primitives, so those patterns live in the platform, not only in your application code. Hatchet can avoid certain classes of problems because it has a central component (the “engine”) backed by a Postgres database for result persistence. This makes it much easier to implement global rate limits and worker management, and lets Hatchet persist ETA tasks instead of having to pull them off the queue immediately. For more background, see our guide on how to think about durable execution.
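As an illustration of what persisting ETA tasks means, the sketch below keeps scheduled tasks as rows in a database and releases them only once their ETA has passed, so nothing waits in a worker’s memory. It is an assumption-laden sketch, not Hatchet’s schema or engine: in-memory SQLite stands in for Postgres, and the `scheduled` table and helper names are hypothetical.

```python
import sqlite3


def make_store() -> sqlite3.Connection:
    # In-memory SQLite stands in for a durable database such as Postgres.
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE scheduled (id INTEGER PRIMARY KEY, name TEXT, "
        "eta REAL, dispatched INTEGER DEFAULT 0)"
    )
    return conn


def schedule(conn: sqlite3.Connection, name: str, eta: float) -> None:
    conn.execute("INSERT INTO scheduled (name, eta) VALUES (?, ?)", (name, eta))
    conn.commit()


def dispatch_due(conn: sqlite3.Connection, now: float) -> list[str]:
    # Only tasks whose ETA has passed leave the database; future tasks stay
    # persisted, so a restart loses nothing.
    rows = conn.execute(
        "SELECT id, name FROM scheduled WHERE dispatched = 0 AND eta <= ? "
        "ORDER BY eta, id",
        (now,),
    ).fetchall()
    conn.executemany(
        "UPDATE scheduled SET dispatched = 1 WHERE id = ?",
        [(row_id,) for row_id, _ in rows],
    )
    conn.commit()
    return [name for _, name in rows]
```

With several dispatchers polling the same Postgres table, you would also need row locking (for example `SELECT ... FOR UPDATE SKIP LOCKED`) so that two dispatchers never claim the same task.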

Global rate limiting and concurrency control

As soon as you have a pool of workers, a common pattern is to enforce a global rate limit across all workers. Celery supports rate limits per task, but each worker instance enforces them separately, so it isn’t possible to set a global rate limit. This is a critical gap when each of your tasks calls an external API that enforces a rate limit across all requests.
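The difference between per-worker and global limits comes down to where the limiter’s state lives. The sketch below is a generic token bucket (not Hatchet’s implementation) that is shared by every worker, so the limit applies to the pool as a whole. Threads stand in for workers here; across processes or machines, the bucket state would have to live in a shared central service, which is the role an orchestration layer can play. `GlobalTokenBucket` and its injectable `clock` are hypothetical names for illustration.

```python
import threading
import time
from typing import Callable


class GlobalTokenBucket:
    """One limiter shared by all workers, so the limit covers the whole pool."""

    def __init__(self, rate_per_s: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_s
        self.capacity = capacity
        self.tokens = float(capacity)  # start full
        self.clock = clock
        self.last = clock()
        self.lock = threading.Lock()

    def try_acquire(self) -> bool:
        with self.lock:
            now = self.clock()
            # Refill in proportion to elapsed time, capped at capacity.
            self.tokens = min(self.capacity,
                              self.tokens + (now - self.last) * self.rate)
            self.last = now
            if self.tokens >= 1:
                self.tokens -= 1
                return True
            return False
```

If four workers each tried to make ten calls against a bucket with `capacity=5` at the same instant, only five calls in total would be allowed. With four independent per-worker buckets, up to twenty would go through, which is exactly how a per-worker limit overshoots an API’s account-wide quota.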

Beyond that, Celery doesn’t let you set a concurrency limit on the tasks themselves, which means there’s a high risk of unfair task assignment between users or tenants sharing a queue. For example, if User A enqueues 100 long-running tasks and a short while later User B enqueues 1 long-running task, all workers will already have picked up User A’s tasks, entirely crowding out User B.
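The User A / User B scenario can be reproduced with a toy dispatcher. This is a sketch of the general idea behind group round-robin scheduling, not Hatchet’s GROUP_ROUND_ROBIN implementation. It reorders a batch that has already arrived, whereas a real scheduler makes the same choice incrementally as tasks arrive. The helper names are hypothetical.

```python
from collections import deque

Task = tuple[str, int]  # (group, task_id); the group is e.g. a user or tenant


def fifo_order(tasks: list[Task]) -> list[Task]:
    """Single shared queue: tasks are dispatched strictly in arrival order."""
    return list(tasks)


def group_round_robin_order(tasks: list[Task]) -> list[Task]:
    """One queue per group; dispatch takes one task from each group in turn."""
    queues: dict[str, deque[Task]] = {}
    for task in tasks:
        queues.setdefault(task[0], deque()).append(task)
    order: list[Task] = []
    while queues:
        for group in list(queues):  # snapshot so groups can be removed mid-cycle
            order.append(queues[group].popleft())
            if not queues[group]:
                del queues[group]
    return order


if __name__ == "__main__":
    tasks = [("A", i) for i in range(100)] + [("B", 0)]
    print(fifo_order(tasks).index(("B", 0)))               # 100
    print(group_round_robin_order(tasks).index(("B", 0)))  # 1
```

Under FIFO, User B’s single task is the 101st to be dispatched. Under round-robin by group, it is dispatched second, right after User A’s first task.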

Hatchet builds global rate limiting, multiple concurrency strategies (including GROUP_ROUND_ROBIN for distributing work fairly across groups such as users or tenants), and task priority into the orchestration layer.

Massively parallel workloads

For use cases like large-scale data ingestion, you often need to run a large number of tasks in parallel. Hatchet is optimized for this type of workload: you can configure per-worker slot control so that each worker only accepts as much work as it can handle. Rate limiting, multiple concurrency strategies, and routing support let you distribute load across workers to process large batches of data, and child spawning and DAG-based execution support unlimited fan-out.
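Per-worker slots and fan-out can be illustrated with plain `asyncio`. This is a sketch of the concept, not Hatchet’s worker configuration API. The hypothetical `Worker` class uses a semaphore so that it never runs more than `slots` tasks at once, and `fan_out` spawns one child per input item, assigned to workers round-robin. That assignment rule is a simplification, since a real orchestrator would route each task to a worker with a free slot.

```python
import asyncio


class Worker:
    """Hypothetical worker that runs at most `slots` tasks at the same time."""

    def __init__(self, name: str, slots: int) -> None:
        self.name = name
        self.slots = asyncio.Semaphore(slots)
        self.running = 0
        self.peak = 0  # highest number of tasks observed running at once

    async def run(self, item: int) -> int:
        async with self.slots:  # wait here until a slot frees up
            self.running += 1
            self.peak = max(self.peak, self.running)
            try:
                await asyncio.sleep(0.01)  # simulated I/O-bound processing
                return item * 2
            finally:
                self.running -= 1


async def fan_out(items: list[int], n_workers: int, slots_per_worker: int):
    # Workers are created inside the running event loop.
    workers = [Worker(f"w{i}", slots_per_worker) for i in range(n_workers)]
    # The parent fans out one child per item, assigned to workers round-robin.
    children = [workers[i % n_workers].run(item) for i, item in enumerate(items)]
    results = await asyncio.gather(*children)
    return results, workers
```

Running `asyncio.run(fan_out(list(range(20)), n_workers=2, slots_per_worker=3))` processes all twenty items, while each worker’s `peak` never exceeds its three slots. Excess children simply wait for a slot instead of overloading the worker.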

Celery can move a lot of work through workers and your broker, but global fairness, tenant-aware rate limits, and admission control usually require extra infrastructure and operational tuning. Hatchet builds those primitives into the orchestration layer.